This post is a part of an ongoing series comparing the performance of Cloudflare Workers with other Serverless providers. In our past tests we intentionally chose a workload which imposes virtually no CPU load (returning the current time). For these tests, let's look at something which pushes hardware to the limit: cryptography.

tl;dr Cloudflare Workers are seven times faster than a default Lambda function for workloads which push the CPU. Workers are six times faster than Lambda@Edge, tested globally.

Slow Crypto

The PBKDF2 algorithm is designed to be slow to compute. It's used to hash passwords; its slowness makes it harder for a password cracker to do their job. Its extreme CPU usage also makes it a good benchmark for the CPU performance of a service like Lambda or Cloudflare Workers.

We've written a test based on the Node Crypto (Lambda) and WebCrypto (Workers) APIs. Our Lambda is deployed with the default 128MB of memory behind an API Gateway in us-east-1; our Worker is, as always, deployed around the world. I also have our function running in a Lambda@Edge deployment to compare that performance as well. Again, we're using Catchpoint to test from hundreds of locations around the world.
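To give a feel for what such a test does, here is a minimal sketch of PBKDF2 through both APIs. It is not the benchmark code itself: the password, salt, iteration count, hash and key length are all placeholder assumptions, since the post doesn't state the parameters we used. It runs under Node so the two results can be compared; in a Worker you would use the global `crypto.subtle` instead of the `webcrypto` import.

```javascript
// Sketch only: every parameter below is an assumption for illustration.
const { pbkdf2Sync, webcrypto } = require("crypto");

const PASSWORD = "correct horse battery staple"; // hypothetical input
const SALT = "example-salt";                     // hypothetical salt
const ITERATIONS = 100000;                       // assumed work factor
const KEY_BYTES = 32;                            // assumed output length

// Node Crypto API, as available inside a Node-based Lambda.
function deriveWithNode() {
  return pbkdf2Sync(PASSWORD, SALT, ITERATIONS, KEY_BYTES, "sha256").toString("hex");
}

// WebCrypto API, the interface Workers expose as crypto.subtle.
async function deriveWithWebCrypto(subtle = webcrypto.subtle) {
  const enc = new TextEncoder();
  // PBKDF2 keys are imported as raw bytes and can only be used for derivation.
  const key = await subtle.importKey("raw", enc.encode(PASSWORD), "PBKDF2", false, ["deriveBits"]);
  const bits = await subtle.deriveBits(
    { name: "PBKDF2", salt: enc.encode(SALT), iterations: ITERATIONS, hash: "SHA-256" },
    key,
    KEY_BYTES * 8 // deriveBits takes a length in bits
  );
  return Buffer.from(bits).toString("hex");
}
```

Given the same inputs, both functions should return the same derived key, so any difference in response time comes from the platform rather than the algorithm.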

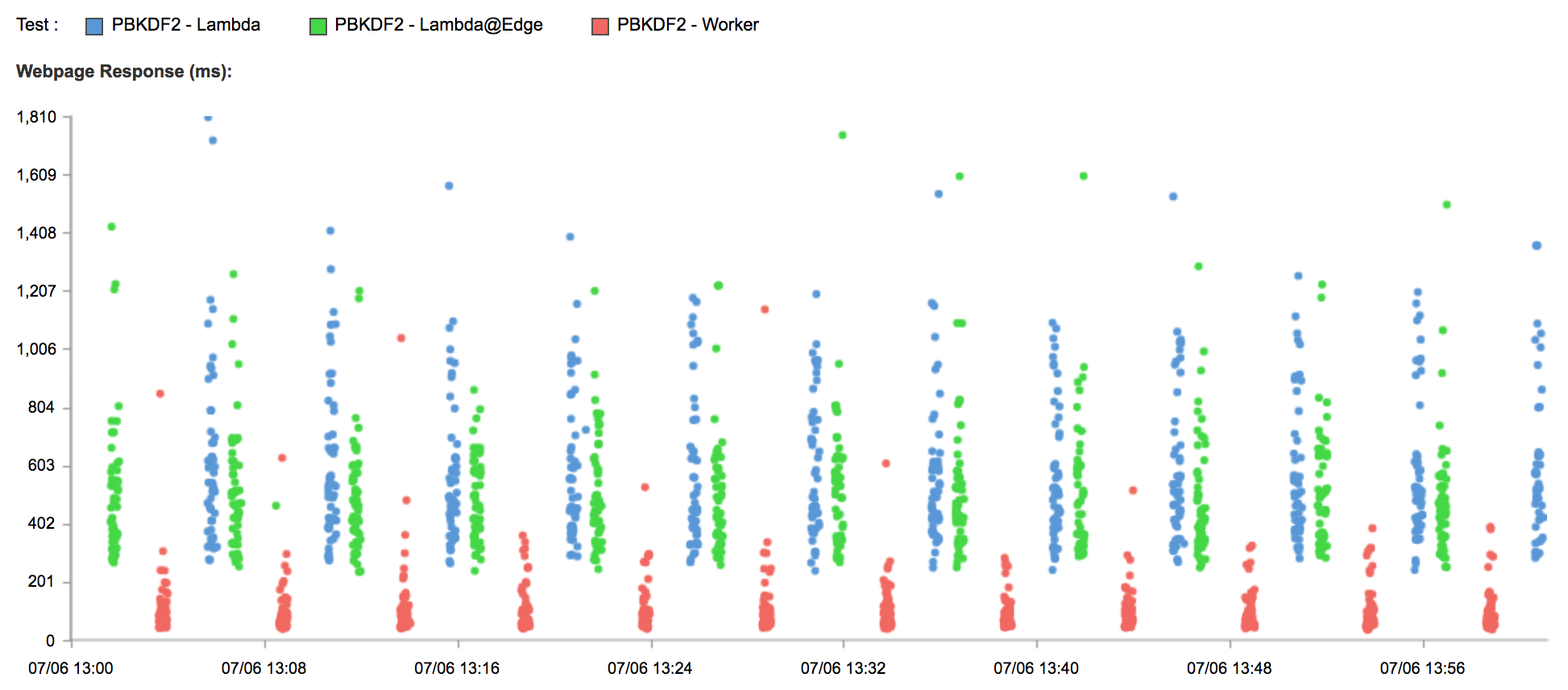

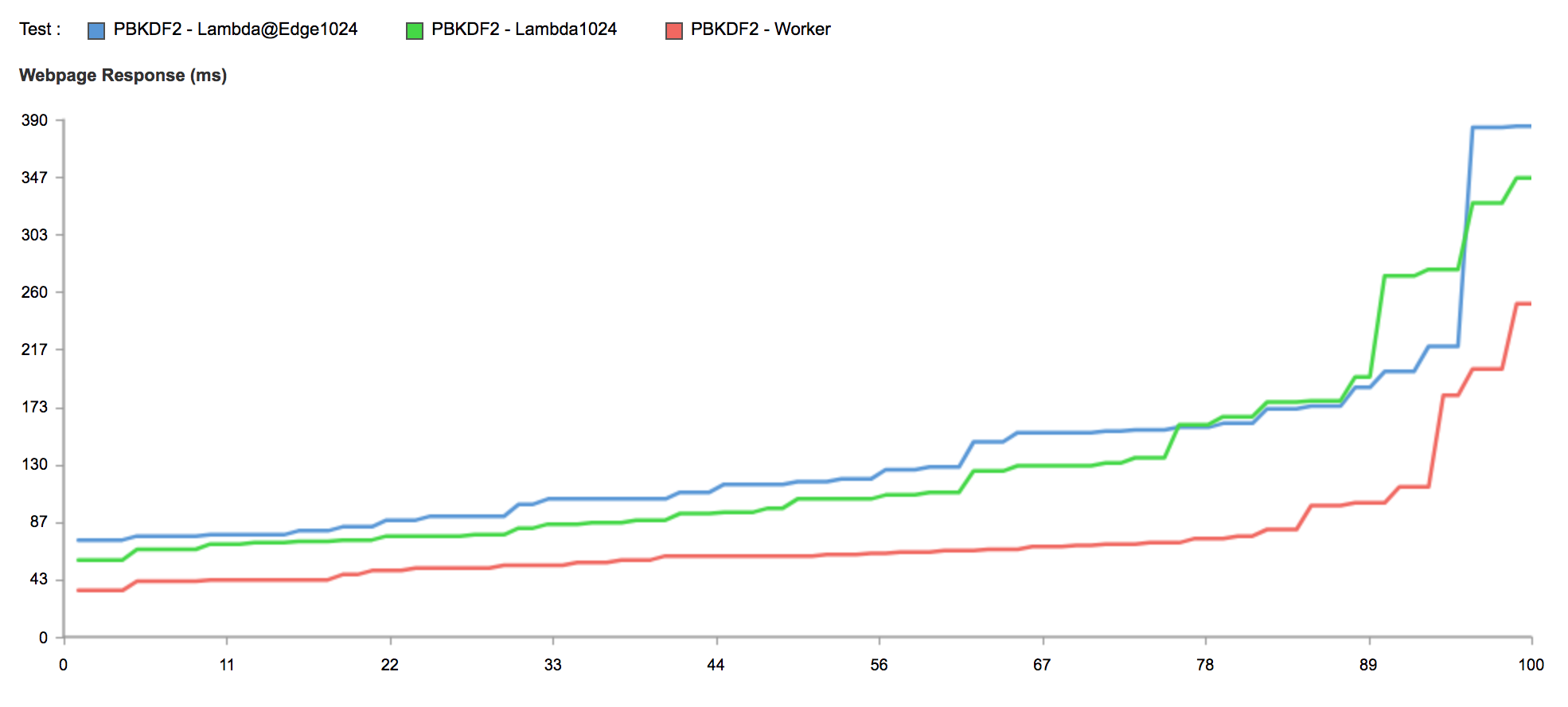

And again, Lambda is slow:

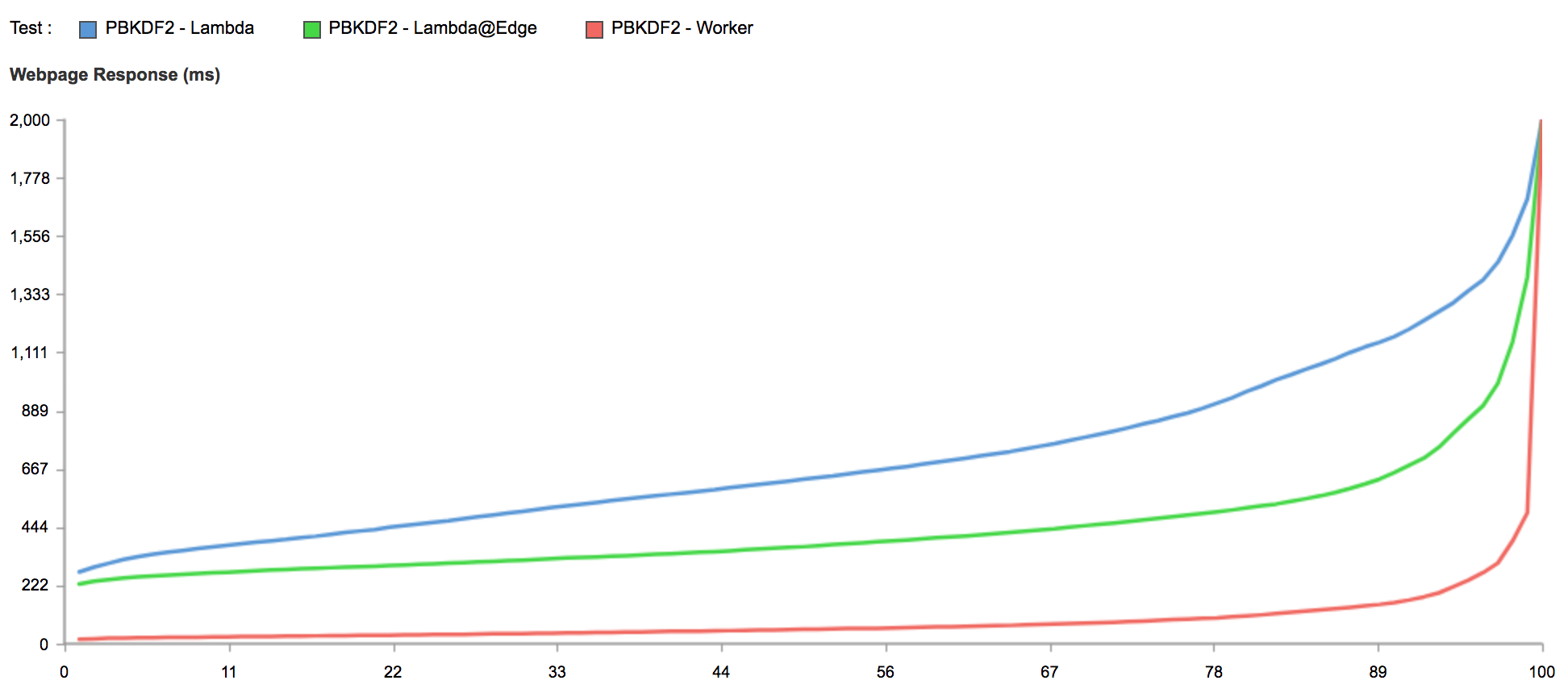

This chart shows what percentage of the requests made in the past 24 hours were faster than a given number of ms:

The 95th percentile response time of Workers is 242ms. Lambda@Edge is 842ms (about 3.5x as long), Lambda 1346ms (about 5.6x as long).
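If you want to compute the same kind of numbers from your own measurements, the sketch below shows one way to do it. It uses the nearest-rank definition of a percentile, which is an assumption on my part; Catchpoint may interpolate differently. The function names are made up for illustration.

```javascript
// Nearest-rank percentile: the smallest sample such that at least p% of
// all samples are less than or equal to it.
function percentile(samplesMs, p) {
  const sorted = [...samplesMs].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(rank, 1) - 1];
}

// Fraction of requests that completed faster than a given threshold,
// which is one point on the cumulative chart described above.
function fractionFasterThan(samplesMs, thresholdMs) {
  return samplesMs.filter((ms) => ms < thresholdMs).length / samplesMs.length;
}
```

Calling `fractionFasterThan` for a range of thresholds and plotting the results reproduces the shape of a "percentage of requests faster than N ms" chart.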

Lambdas are billed based on the amount of memory they allocate and the number of CPU ms they consume, rounded up to the nearest hundred ms. Running a function which consumes 800ms of compute will cost me $1.86 per million requests. Workers is $0.50/million flat. Obviously even beyond the cost, my users would have a pretty terrible experience waiting over a second for responses.

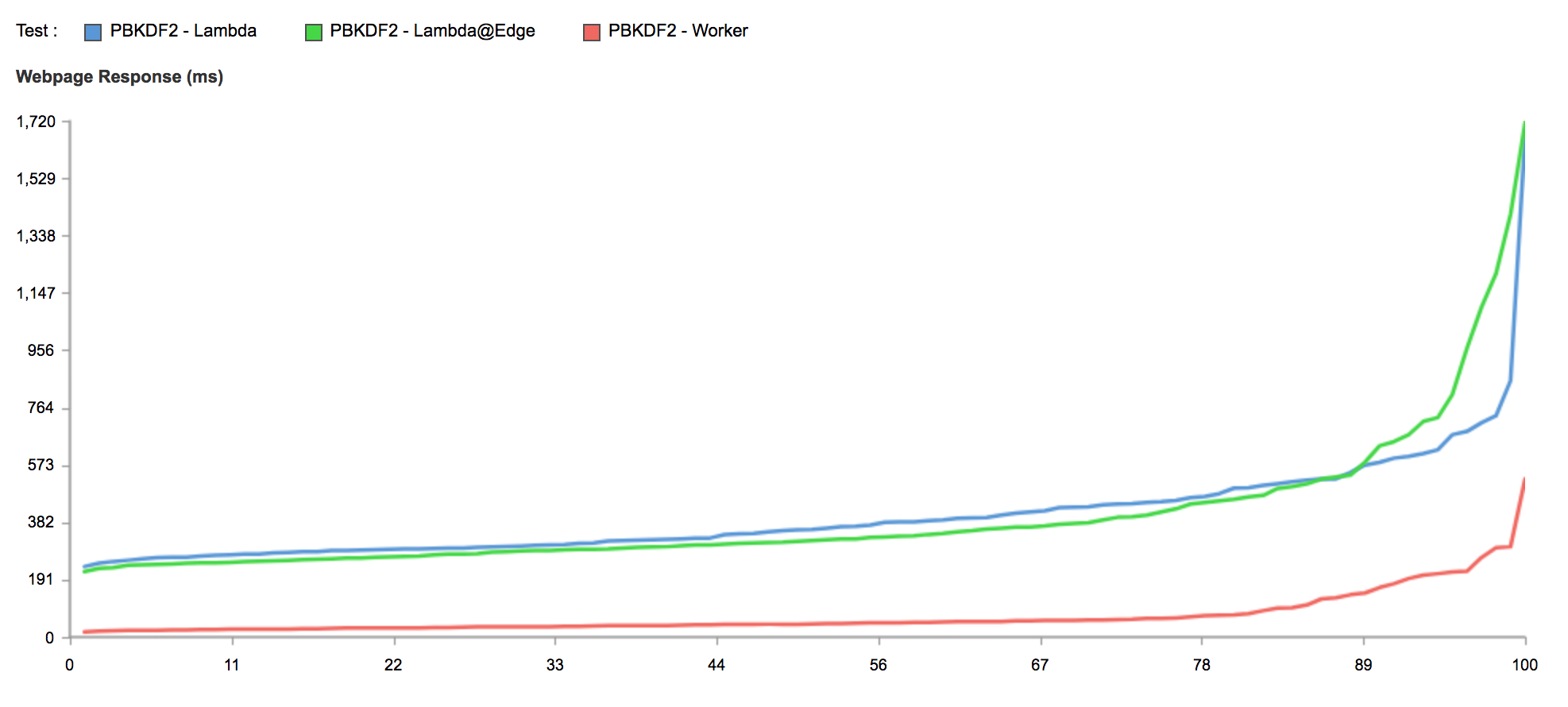

As I said, Workers run in almost 160 locations around the world while my Lambda is only running in Northern Virginia. This is something of an unfair comparison, so let's look at just tests in Washington DC:

Unsurprisingly the Lambda and Lambda@Edge performance evens out to show us exactly what speed Amazon throttles our CPU to. At the 50th percentile, Lambda processors appear to be 6-7x slower than the mid-range server cores in our data centers.

Memory

What you might not realize is that the power of the CPU your Lambda gets is a function of how much memory you allocate for it. As you ramp up the memory you get more performance. To test this we allocated a function with 1024MB of memory:

Again, these are only tests emanating from Washington DC, and I have filtered out points over 500ms.

The performance of both the Lambda and Lambda@Edge tests with 1024MB of memory is now much closer, but remains roughly half that of Workers. Even worse, running my new Lambda through the somewhat byzantine pricing formula reveals that 100ms (the minimum) of my 1024MB Lambda will cost me the exact same $1.86 I was paying before. This makes Lambda 3.7x more expensive than Workers on a per-cycle basis.

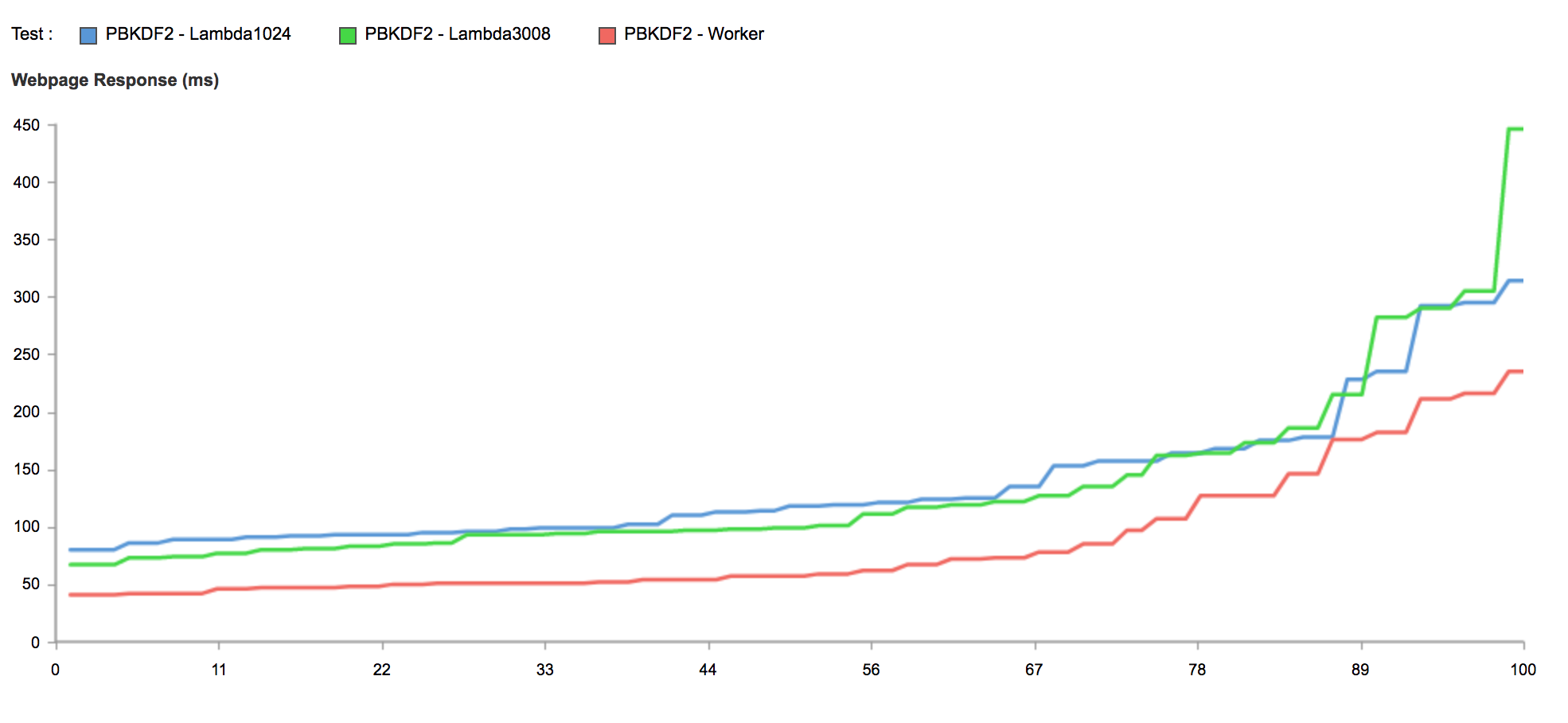

Adding more memory than that only adds cost, not speed. Here is a 3008MB instance (the max I can allocate):

This Lambda would be costing me $5.09 per million requests, over 10x more than a Worker, but would still provide less CPU.
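The prices quoted in this post can be roughly reproduced with a few lines of arithmetic. The sketch below assumes list prices of $0.20 per million requests and $0.00001667 per GB-second, which are not stated in the post and may not match current pricing; the 100ms rounding and the $0.50 per million Workers price come from the text above.

```javascript
// Assumed Lambda list prices (not taken from the post).
const PRICE_PER_MILLION_REQUESTS = 0.20; // dollars
const PRICE_PER_GB_SECOND = 0.00001667;  // dollars
const BILLING_INCREMENT_MS = 100;        // duration is rounded up to this
const WORKERS_PER_MILLION = 0.50;        // dollars, flat

// Estimated cost in dollars of one million invocations.
function lambdaCostPerMillion(memoryMB, durationMs) {
  const billedMs = Math.ceil(durationMs / BILLING_INCREMENT_MS) * BILLING_INCREMENT_MS;
  const gbSeconds = (memoryMB / 1024) * (billedMs / 1000);
  return PRICE_PER_MILLION_REQUESTS + gbSeconds * PRICE_PER_GB_SECOND * 1e6;
}

// The three configurations discussed in this post.
for (const [memoryMB, durationMs] of [[128, 800], [1024, 100], [3008, 100]]) {
  const cost = lambdaCostPerMillion(memoryMB, durationMs);
  const ratio = cost / WORKERS_PER_MILLION;
  console.log(`${memoryMB}MB for ${durationMs}ms: $${cost.toFixed(3)} per million (${ratio.toFixed(1)}x Workers)`);
}
```

Under those assumptions the 128MB function at 800ms and the 1024MB function at 100ms both consume 0.1 GB-seconds per request, which is why they come out at the same price.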

In general our supposition is that a 128MB Lambda is buying you 1/8th of a CPU core. This scales up as you elect to purchase Lambdas with more memory, until it hits the performance of one of their cores (minus hypervisor overhead), at which point it levels off.
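That supposition can be written down as a tiny model. To be clear, this is a guess fitted to our observations, not documented Lambda behavior: it assumes the share of a core grows linearly with memory and caps at one full core at 1024MB, and it ignores hypervisor overhead.

```javascript
// Assumed memory size at which a Lambda gets a whole core.
const FULL_CORE_MB = 1024;

// Estimated fraction of one CPU core for a given memory allocation.
function estimatedCoreShare(memoryMB) {
  return Math.min(1, memoryMB / FULL_CORE_MB);
}

// Estimated wall-clock time for a task that needs fullCoreMs on a whole core.
function estimatedDurationMs(fullCoreMs, memoryMB) {
  return fullCoreMs / estimatedCoreShare(memoryMB);
}
```

With this model a task needing 100ms on a full core takes 800ms at 128MB, which is consistent with the 800ms and 100ms durations used in the pricing comparison above, and nothing changes between 1024MB and 3008MB.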

Ultimately Amazon is charging based on the duration of execution, but on top of a much slower execution platform than we expected.

Native Code

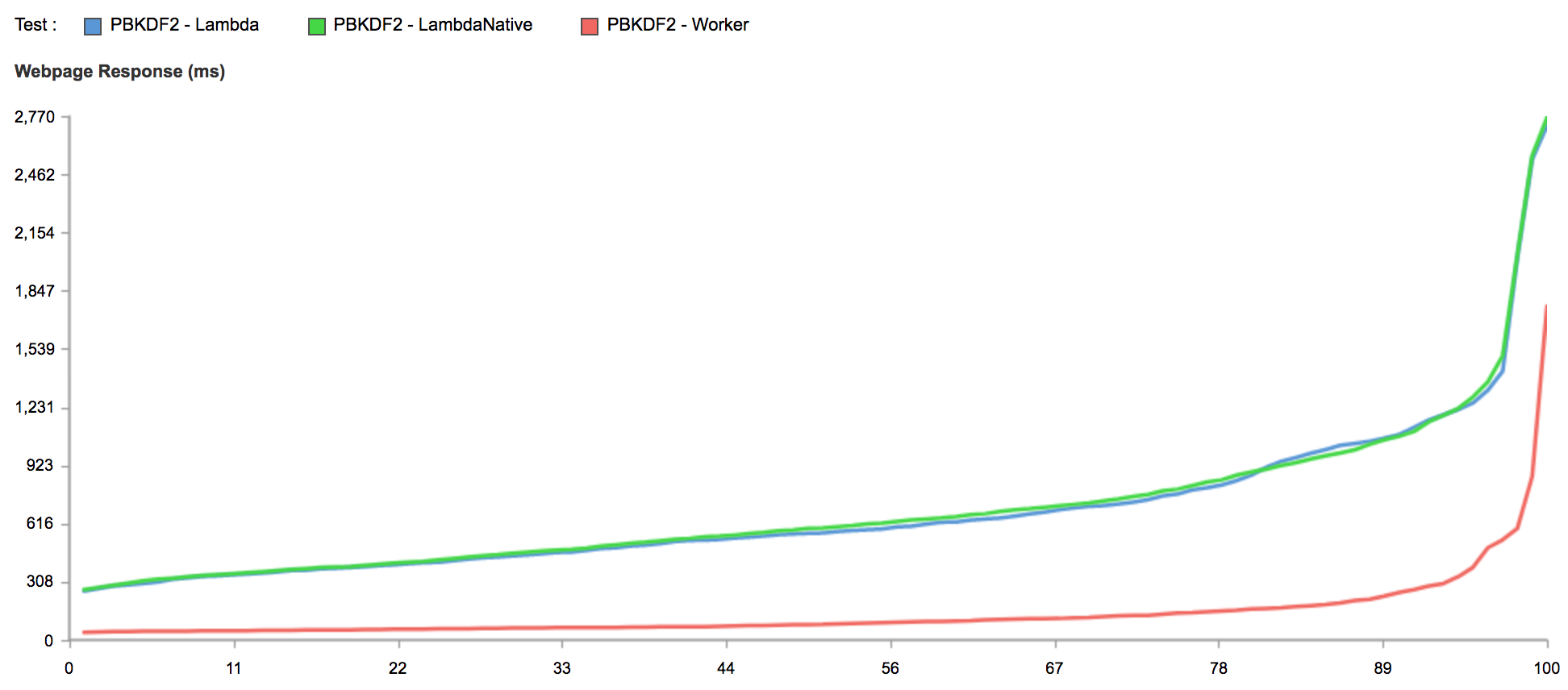

One response we saw to our last post was that Lambda supports running native binaries and that's where its real performance will be exhibited.

It is of course true that our Javascript tests are likely (definitely in the case of Workers) just calling out to an underlying compiled cryptography implementation. But I don't know the details of how Lambda is implemented; perhaps the critics have a point that native binaries could be faster. So I decided to extend my tests.

After beseeching a couple of my colleagues who have more recent C++ experience than I do, I ended up with a Lambda which executes the BoringSSL implementation of PBKDF2 in plain C++14. The results are utterly boring:

A Lambda executing native code is just as slow as one executing Javascript.

Java

As I mentioned, irrespective of the language, all of this cryptography is likely calling the same highly optimized C implementations. In that way it might not be an accurate reflection of the performance of our chosen programming languages (but is an accurate reflection of CPU performance). Many people still believe that code written in languages like Java is faster than Javascript in V8. I decided to disprove that as well, and I was willing to sacrifice to the point where I installed the JDK, and resigned myself to a lifetime of update notifications.

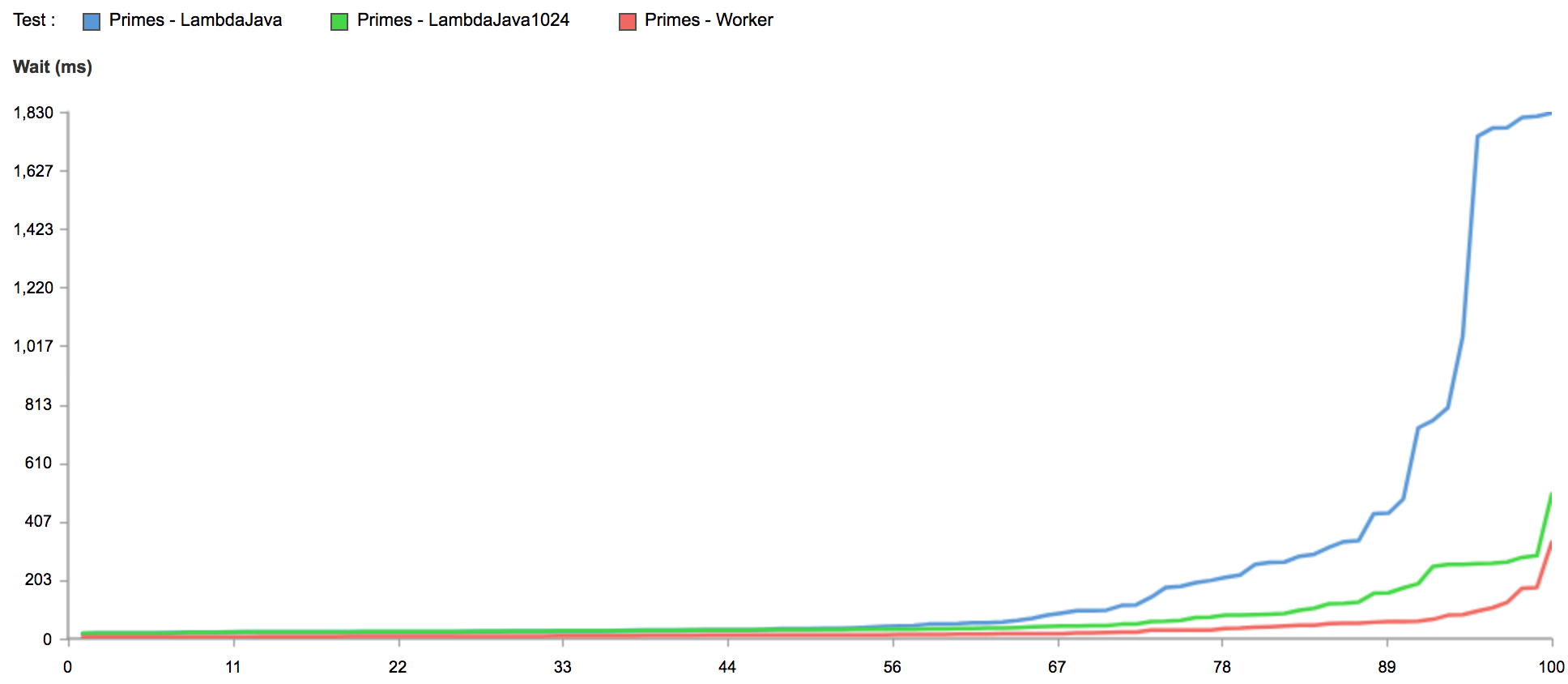

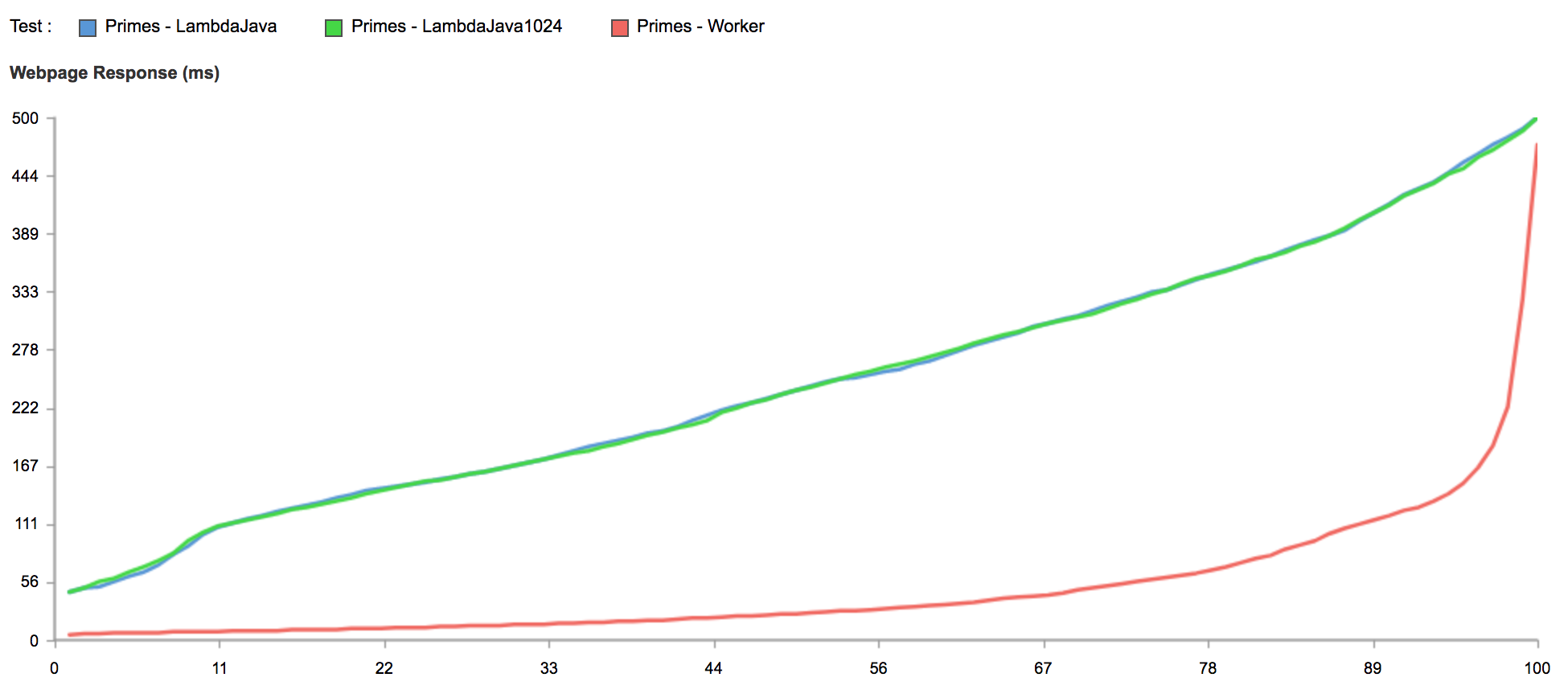

To test application-level performance I dusted off my interview chops and wrote a naïve prime factorization function in both Javascript and Java. I won't weigh in on the wisdom of using new vs old guard languages, but I will say it doesn't buy you much with Lambda:

This is charting two Lambdas, one with 128MB of memory, the other 1024MB. The tests are all from Washington, DC (near both of our Lambdas) to eliminate the advantage of our Worker's global presence. The 128MB instance has a 95th percentile wait time of 1745ms.
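For reference, a naïve prime factorization in Javascript can be as simple as trial division. The version below is a sketch of that technique, not the exact function used in these tests, and it only handles positive integers small enough to be represented exactly as a Javascript number.

```javascript
// Trial division: repeatedly divide out the smallest remaining factor.
function primeFactors(n) {
  const factors = [];
  let remaining = n;
  // Only divisors up to the square root of what is left need checking.
  for (let d = 2; d * d <= remaining; d++) {
    while (remaining % d === 0) {
      factors.push(d);
      remaining /= d;
    }
  }
  // Whatever is left above 1 is itself prime.
  if (remaining > 1) factors.push(remaining);
  return factors;
}
```

The work here is pure interpreter-level arithmetic with no calls into native libraries, which is what makes this kind of function useful for comparing language runtimes rather than crypto implementations.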

When we look at tests which originate all around the globe, where billions of Internet users browse every day, we get the best example of the power of a Worker's deployment yet:

When we exclude the competitors from our analysis we are able to use the same techniques to examine the performance of the Workers platform itself and uncover more than a few opportunities for improvement and optimization. That's what we're focused on: making Workers even faster, more powerful, and easier to use.

As you might imagine given these results, the Workers team is growing. If you'd like to work on this or other audacious projects at Cloudflare, reach out.