At Cloudflare, we aim to make the Internet faster and safer for everyone. One way we do this is through caching: we keep copies of our customers' content in our 165 data centers around the world. This brings content closer to users and reduces traffic back to origin servers.

Today, we’re excited to announce a huge change in how our cache works. Cloudflare Workers now integrates the Cache API, giving you programmatic control over our caches around the world.

Why the Cache API?

Figuring out what to cache and how can get complicated. Consider an e-commerce site with a shopping cart, a Content Management System (CMS) with many templates and hundreds of articles, or a GraphQL API. Each contains a mix of elements that are dynamic for some users, but might stay unchanged for the vast majority of requests.

Over the last 8 years, we’ve added more features to give our customers flexibility and control over what goes in the cache. However, we’ve learned that we need to offer more than just adding settings in our dashboard. Our customers have told us clearly that they want to be able to express their ideas in code, to build things we could never have imagined.

How the Cache API works

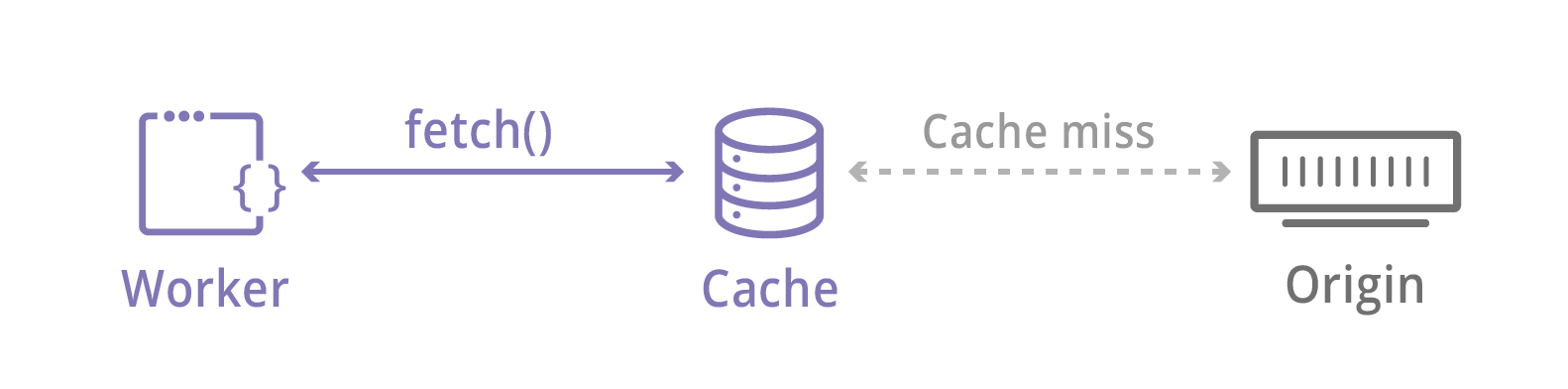

Fetching content is one of the most common Worker tasks. fetch() has always leveraged powerful Cloudflare features like Argo and Load Balancing. It also runs through our cache: we check for content locally before connecting to the Internet. Without the Cache API, content requested with fetch() goes in the cache as-is.

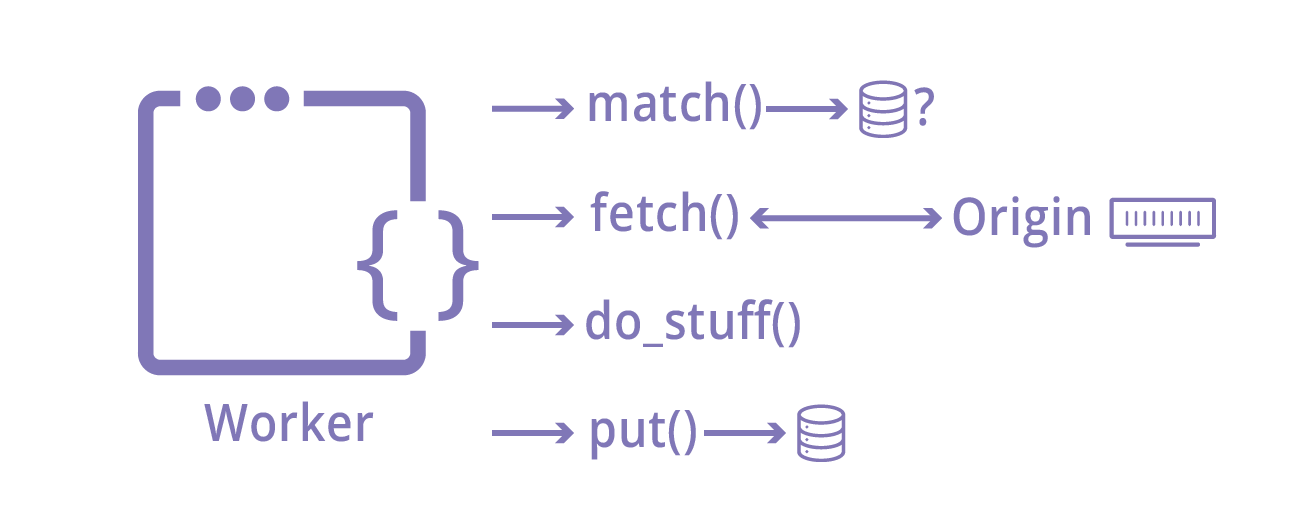

The Cache API changes all of that. It’s based on the Service Workers Cache API, and at its core it offers three methods:

- put(request, response) places a Response in the cache, keyed by a Request

- match(request) returns the Response that was previously put() for that Request, if there is one

- delete(request) deletes a Response that was previously put()
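As a rough illustration, the three methods can be combined into a read-through lookup. This is a minimal sketch, not code from our documentation: `getOrFetch`, `purge`, and the `fetchFromOrigin` callback are hypothetical names, and `cache` is assumed to be a Cache object such as `caches.default` inside a Worker.

```javascript
// Hypothetical helper: serve from the cache when possible, otherwise fetch and store.
// `cache` is assumed to expose match/put/delete (for example, caches.default in a Worker).
// `fetchFromOrigin` is an assumed callback that returns a Promise for a Response.
async function getOrFetch(cache, request, fetchFromOrigin) {
  let response = await cache.match(request)
  if (!response) {
    response = await fetchFromOrigin(request)
    // Store a clone, because a Response body can only be read once.
    await cache.put(request, response.clone())
  }
  return response
}

// Hypothetical helper: remove an entry so the next lookup goes back to the origin.
async function purge(cache, request) {
  return cache.delete(request)
}
```

In a Worker, a call might look like `getOrFetch(caches.default, event.request, fetch)`.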

This API unleashes a huge amount of power. Because Workers give you the ability to modify Request and Response objects, you can control any caching behavior like TTL or cache tags. You can implement custom Vary logic or cache normally-uncacheable objects like POST requests.
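For example, controlling the TTL can come down to rewriting a header before the response is stored. The sketch below assumes that put() takes its expiry from the `Cache-Control` header on the Response it is given; `withTtl` is a hypothetical helper name, not part of the API.

```javascript
// Hypothetical helper: return a copy of `response` whose Cache-Control header
// asks for a TTL of `seconds`. The original response body is moved into the copy.
function withTtl(response, seconds) {
  const headers = new Headers(response.headers)
  headers.set('Cache-Control', `max-age=${seconds}`)
  return new Response(response.body, {
    status: response.status,
    statusText: response.statusText,
    headers,
  })
}
```

A Worker could then store the result with something like `cache.put(request, withTtl(response.clone(), 300))` and still return the original response to the client.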

The Cache API expects requests and responses, but they don't have to come from external servers. Your worker can generate arbitrary data that’s stored in our cache. That means you can use the Cache API as a general-purpose, ephemeral key-value store!
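One way to picture the key-value idea is to wrap JSON values in synthetic Responses. This is only a sketch under stated assumptions: `kvPut`, `kvGet`, and the `https://kv.example/` prefix are made up for illustration (the URL is never fetched, it only serves as a key), and `cache` is assumed to be a Cache object such as `caches.default`.

```javascript
// Hypothetical key prefix. It is never requested over the network;
// it only gives each key a URL-shaped name.
const KV_PREFIX = 'https://kv.example/'

// Store a JSON-serializable value under `key` for roughly `ttlSeconds`.
async function kvPut(cache, key, value, ttlSeconds = 60) {
  const response = new Response(JSON.stringify(value), {
    headers: {
      'Content-Type': 'application/json',
      'Cache-Control': `max-age=${ttlSeconds}`,
    },
  })
  await cache.put(new Request(KV_PREFIX + encodeURIComponent(key)), response)
}

// Read the value back, or undefined if it is missing or has been evicted.
async function kvGet(cache, key) {
  const response = await cache.match(new Request(KV_PREFIX + encodeURIComponent(key)))
  return response ? response.json() : undefined
}
```

Because the store is ephemeral, callers should always be prepared for `kvGet` to return `undefined` and fall back to recomputing or refetching the value.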

Case study: Using the Cache API to cache POST requests

Since we announced the beta in September, usage of the Cache API has grown to serve thousands of requests per second. One common use case is caching POST requests.

Normally, Cloudflare does not consider POST requests to be cacheable because they are designed to be non-idempotent: that is, they change state on the server when a request is made. However, applications can also use POST requests to send large amounts of data to the server, or as a common HTTP method for API calls.

Here’s what one developer had to say about using the Cache API:

We needed to migrate some complex server side code to the edge. We have an API endpoint that accepts POST requests with large bodies, but mostly returns the same data without changing anything on our origin server. With Workers and the Cache API, we were able to cache responses to POST requests that we knew were safe, and reduce significant load on our origin.

— Aaron Dearinger, Edge Architect, Garmin International

Caching POST requests with the Cache API is simple. Here’s some example code from our documentation:

    async function handleRequest(event) {
      let request = event.request
      let response

      if (request.method === 'POST') {
        // Read a clone of the body so the original request can still be sent to the origin
        let body = await request.clone().text()
        let hash = await sha256(body)

        // Build a cache key URL that is unique to this path and body
        let url = new URL(request.url)
        url.pathname = "/posts" + url.pathname + hash

        // Convert to a GET to be able to cache
        let cacheKey = new Request(url.toString(), {
          headers: request.headers,
          method: 'GET'
        })

        let cache = caches.default
        // try to find the cache key in the cache
        response = await cache.match(cacheKey)

        // otherwise, fetch from origin
        if (!response) {
          // makes POST to the origin
          response = await fetch(request)
          // store a copy without delaying the response to the client
          event.waitUntil(cache.put(cacheKey, response.clone()))
        }
      } else {
        response = await fetch(request)
      }
      return response
    }

    async function sha256(message) {
      // encode as UTF-8
      const msgBuffer = new TextEncoder().encode(message)
      // hash the message
      const hashBuffer = await crypto.subtle.digest('SHA-256', msgBuffer)
      // convert ArrayBuffer to Array
      const hashArray = Array.from(new Uint8Array(hashBuffer))
      // convert bytes to hex string
      const hashHex = hashArray.map(b => ('00' + b.toString(16)).slice(-2)).join('')
      return hashHex
    }
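To make the cache key derivation easier to see in isolation, here is a small sketch of just that step. `postCacheKeyUrl` is a hypothetical helper that is not part of the documentation example, and the hash values shown are placeholders rather than real SHA-256 digests.

```javascript
// Hypothetical helper: derive the cache key URL for a POST from the request URL
// and a hash of its body, mirroring the pathname rewrite in the example above.
function postCacheKeyUrl(requestUrl, bodyHash) {
  const url = new URL(requestUrl)
  url.pathname = '/posts' + url.pathname + bodyHash
  return url.toString()
}

// Two POSTs to the same endpoint with different bodies get different keys,
// so their responses are cached separately.
const keyA = postCacheKeyUrl('https://example.com/api/search?q=1', 'abc')
const keyB = postCacheKeyUrl('https://example.com/api/search?q=1', 'def')
console.log(keyA) // https://example.com/posts/api/searchabc?q=1
console.log(keyB) // https://example.com/posts/api/searchdef?q=1
```

The query string is left untouched, so requests that differ only in their query parameters also map to separate cache entries.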

Try it yourself

Already in our beta, we’ve seen customers use the Cache API to dynamically cache parts of GraphQL queries and store customer data to improve performance. We’re excited to see what you build! Check out the Cloudflare Workers getting started guide and the Cache API docs, and let us know what you’ve built in the Workers Community forum.